Wrap Your Mind Around Neural Networks

- by 7wData

Artificial Intelligence is playing an ever-increasing role in the lives of civilized nations, though most citizens probably don’t realize it. It’s now commonplace to speak with a computer when calling a business. Facebook is becoming scarily accurate at recognizing faces in uploaded photos. Physical interaction with smartphones is becoming a thing of the past… with Apple’s Siri and Google Speech, it’s slowly but surely becoming easier to simply talk to your phone and tell it what to do than to type or touch an icon. Try this if you haven’t before: if you have an Android phone, say “OK Google”, followed by “Lumos”. It’s magic!

Advertisements for products we’re interested in pop up on our social media accounts as if something is reading our minds. Truth is, something is reading our minds… though it’s hard to pin down exactly what that something is. An advertisement might pop up for something that we want, even though we never realized we wanted it until we saw it. This is not coincidental, but stems from an AI algorithm.

At the heart of many of these AI applications lies a process known as Deep Learning. There has been a lot of talk about Deep Learning lately, not only here on Hackaday, but all over the interwebs. And like most things related to AI, it can be a bit complicated and difficult to understand without a strong background in computer science.

If you’re familiar with my quantum theory articles, you’ll know that I like to take complicated subjects, strip away the complication as best I can, and explain them in a way that anyone can understand. It is the goal of this article to apply a similar approach to this idea of Deep Learning. If neural networks make you cross-eyed and Machine Learning gives you nightmares, read on. You’ll see that “Deep Learning” sounds like a daunting subject, but is really just a $20 term used to describe something whose underpinnings are relatively simple.

When we program a machine to perform a task, we write the instructions and the machine performs them. For example, LED on… LED off… there is no need for the machine to know the expected outcome after it has completed the instructions. There is no reason for the machine to know if the LED is on or off. It just does what you told it to do. With Machine Learning, this process is flipped. We tell the machine the outcome we want, and the machine ‘learns’ the instructions to get there. There are several ways to do this, but let us focus on an easy example:

If I were to ask you to make a little robot that can guide itself to a target, a simple way to do this would be to put the robot and target on an XY Cartesian plane, and then program the robot to go so many units on the X axis, and then so many units on the Y axis. This straightforward method has the robot simply carrying out instructions, without actually knowing where the target is. It works only when you know the coordinates for the starting point and target. If either changes, this approach would not work.
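To make the limitation concrete, here is a minimal sketch of that hard-coded approach. The function name `follow_fixed_route` and the coordinates are made up for illustration; the point is only that the robot replays fixed offsets and never looks at the target.

```python
# Hypothetical hard-coded route: the robot just replays its instructions.
def follow_fixed_route(start, dx, dy):
    """Move dx units along X, then dy units along Y, and report where we ended up."""
    x, y = start
    x += dx  # go so many units on the X axis
    y += dy  # then so many units on the Y axis
    return (x, y)

target = (7, 3)

# Works when the start is the one we planned for...
print(follow_fixed_route((0, 0), 7, 3) == target)  # True

# ...and misses as soon as the starting point changes.
print(follow_fixed_route((2, 1), 7, 3) == target)  # False
```

Moving the target instead of the start breaks it in exactly the same way, since nothing in the instructions depends on where the target actually is.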

Machine Learning allows us to deal with changing coordinates. We tell our robot to find the target, and let it figure out, or learn, its own instructions to get there. One way to do this is to have the robot measure the distance to the target, and then move in a random direction. It then recalculates the distance, moves back to where it started, and records the distance measurement. Repeating this process gives us several distance measurements, each taken after moving from the same fixed coordinate. After a set number of measurements are taken, the robot moves in the direction where the distance to the target was shortest, and repeats the sequence. This will eventually allow it to reach the target. In short, the robot is simply using trial-and-error to ‘learn’ how to get to the target. See, this stuff isn’t so hard after all!
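The trial-and-error loop described above might look something like the following sketch. Everything here is an assumption made for illustration: the names `distance` and `seek_target`, the step size, the number of trial moves per round, and the stopping tolerance. It also adds one small rule the description leaves open: if none of the trial moves gets closer, the robot stays put and tries again.

```python
import math
import random

def distance(a, b):
    """Straight-line distance between two (x, y) points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])

def seek_target(start, target, step=1.0, trials=8, tolerance=1.0,
                max_rounds=1000, seed=0):
    """Reach the target by probing random directions and keeping the best one."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    position = start
    for _ in range(max_rounds):
        if distance(position, target) <= tolerance:
            break  # close enough, stop searching
        best, best_dist = position, distance(position, target)
        for _ in range(trials):
            # Trial move in a random direction, measured from the same spot.
            angle = rng.uniform(0.0, 2.0 * math.pi)
            candidate = (position[0] + step * math.cos(angle),
                         position[1] + step * math.sin(angle))
            d = distance(candidate, target)
            if d < best_dist:  # remember the shortest distance seen so far
                best, best_dist = candidate, d
        position = best  # commit to the best trial move (or stay if none helped)
    return position

target = (7.0, 3.0)
final = seek_target((0.0, 0.0), target)
print(distance(final, target) <= 1.0)
```

Notice that the same function works unchanged for a different start or a different target, because the robot only ever relies on the measured distance and not on coordinates baked into its instructions.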

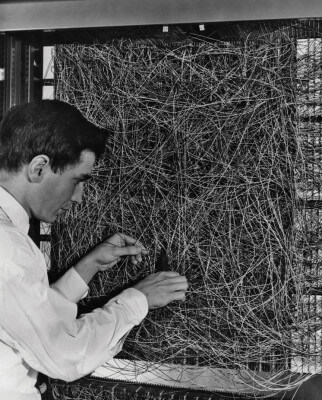

This “learning by trial-and-error” idea can be represented abstractly in something that we’ve all heard of — a neural network.
