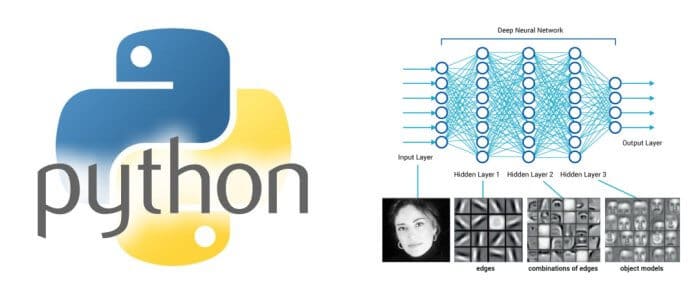

An Overview of Python Deep Learning Frameworks

- by 7wData

I recently stumbled across an old Data Science Stack Exchange answer of mine on the topic of the “Best Python library for neural networks”, and it struck me how much the Python deep learning ecosystem has evolved over the past 2.5 years. The library I recommended in July 2014 is no longer actively developed or maintained, but a whole host of deep learning libraries have sprung up to take its place. Each has its own strengths and weaknesses. We’ve used most of the technologies on this list in production or development at indico; for the few we haven’t, I’ll draw on the experiences of others to give a clear, comprehensive picture of the Python deep learning ecosystem of 2017.

In particular, we’ll be looking at:

Theano is a Python library that allows you to define, optimize, and evaluate mathematical expressions involving multi-dimensional arrays efficiently. It works with GPUs and performs efficient symbolic differentiation.

Theano is the numerical computing workhorse that powers many of the other deep learning frameworks on our list. It was built by Frédéric Bastien and the excellent research team behind the University of Montreal’s lab, MILA. Its API is quite low-level, and to write effective Theano code you need to be quite familiar with the algorithms that other frameworks hide behind the scenes. Theano is a go-to library if you have substantial academic machine learning expertise, want very fine-grained control of your models, or want to implement a novel or unusual model. In general, Theano trades ease of use for flexibility.
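Theano’s core idea is that you first build a symbolic expression graph and only evaluate it later, with gradients derived symbolically from that graph rather than approximated numerically. The sketch below illustrates the idea in plain Python using hypothetical `Expr`, `Const`, `Var`, `Add`, and `Mul` classes; it is a toy for intuition, not Theano’s API. (In Theano itself you would declare variables from `theano.tensor`, take gradients with `theano.tensor.grad`, and compile the graph into a callable with `theano.function`, which also optimizes the graph.)

```python
# Toy symbolic expression graph (hypothetical classes, NOT Theano's API).
# Expressions are built first; evaluation and differentiation happen later.


class Expr:
    """Base class for nodes in a symbolic expression graph."""

    def __add__(self, other):
        return Add(self, other)

    def __mul__(self, other):
        return Mul(self, other)


class Const(Expr):
    def __init__(self, value):
        self.value = value

    def evaluate(self, env):
        return self.value

    def grad(self, wrt):
        return Const(0.0)  # derivative of a constant is 0


class Var(Expr):
    def __init__(self, name):
        self.name = name

    def evaluate(self, env):
        return env[self.name]  # look up the bound value by name

    def grad(self, wrt):
        return Const(1.0 if self is wrt else 0.0)


class Add(Expr):
    def __init__(self, a, b):
        self.a, self.b = a, b

    def evaluate(self, env):
        return self.a.evaluate(env) + self.b.evaluate(env)

    def grad(self, wrt):
        return self.a.grad(wrt) + self.b.grad(wrt)  # sum rule


class Mul(Expr):
    def __init__(self, a, b):
        self.a, self.b = a, b

    def evaluate(self, env):
        return self.a.evaluate(env) * self.b.evaluate(env)

    def grad(self, wrt):
        # product rule: (ab)' = a'b + ab'
        return self.a.grad(wrt) * self.b + self.a * self.b.grad(wrt)


# Build y = x*x + 3*x symbolically; nothing is computed yet.
x = Var("x")
y = x * x + Const(3.0) * x
dy_dx = y.grad(x)  # a new (unsimplified) symbolic expression for 2x + 3

print(y.evaluate({"x": 2.0}))      # 10.0
print(dy_dx.evaluate({"x": 2.0}))  # 7.0
```

Note that `dy_dx` is itself an expression graph that can be evaluated at any point, which is the property that lets Theano-based frameworks compute gradients for training without hand-written derivative code.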

Lasagne is a lightweight library for building and training neural networks in Theano.

Since Theano aims first and foremost to be a library for symbolic mathematics, Lasagne offers abstractions on top of Theano that make it more suitable for deep learning. It’s written and maintained primarily by Sander Dieleman, currently a research scientist at DeepMind. Instead of specifying network models in terms of functional relationships between symbolic variables, Lasagne lets users think at the layer level, offering building blocks like “Conv2DLayer” and “DropoutLayer”. Lasagne sacrifices little flexibility while providing a wealth of common components for layer definition, layer initialization, model regularization, model monitoring, and model training.
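To make “thinking at the layer level” concrete, here is a minimal, dependency-free sketch of the pattern: each layer is constructed from the layer that feeds it, and a single `get_output` call walks the chain. The names loosely echo Lasagne’s (`InputLayer`, `DenseLayer`, `DropoutLayer`, `get_output`, `deterministic`), but these classes are hypothetical stand-ins written for illustration, not Lasagne’s implementation; the real `lasagne.layers.get_output` builds a Theano expression rather than computing numbers directly.

```python
import math
import random


class InputLayer:
    """Hypothetical input layer: simply forwards the data it is given."""

    def __init__(self, size):
        self.size = size
        self.input_layer = None  # start of the chain

    def get_output_for(self, inputs, deterministic=False):
        return inputs


class DenseLayer:
    """Fully connected layer: out_j = nonlinearity(sum_i W[j][i] * x_i + b_j)."""

    def __init__(self, incoming, num_units, nonlinearity=math.tanh, seed=0):
        self.input_layer = incoming
        self.size = num_units
        rng = random.Random(seed)
        # Small uniform random weights (an assumption; real libraries offer many init schemes).
        self.W = [[rng.uniform(-0.1, 0.1) for _ in range(incoming.size)]
                  for _ in range(num_units)]
        self.b = [0.0] * num_units
        self.nonlinearity = nonlinearity

    def get_output_for(self, inputs, deterministic=False):
        return [self.nonlinearity(sum(w * x for w, x in zip(row, inputs)) + b)
                for row, b in zip(self.W, self.b)]


class DropoutLayer:
    """Inverted dropout: drop each unit with probability p in training, rescale survivors."""

    def __init__(self, incoming, p=0.5, seed=0):
        self.input_layer = incoming
        self.size = incoming.size
        self.p = p
        self.rng = random.Random(seed)

    def get_output_for(self, inputs, deterministic=False):
        if deterministic or self.p == 0.0:
            return list(inputs)  # no dropout at test time
        keep = 1.0 - self.p
        return [x / keep if self.rng.random() < keep else 0.0 for x in inputs]


def get_output(layer, inputs, deterministic=False):
    """Walk back to the input layer, then apply each layer in forward order."""
    chain = []
    while layer is not None:
        chain.append(layer)
        layer = layer.input_layer
    out = inputs
    for lyr in reversed(chain):
        out = lyr.get_output_for(out, deterministic=deterministic)
    return out


# Build a small network layer by layer.
l_in = InputLayer(4)
l_hid = DenseLayer(l_in, num_units=8)
l_drop = DropoutLayer(l_hid, p=0.5)
l_out = DenseLayer(l_drop, num_units=2, nonlinearity=lambda z: z)

x = [0.5, -1.0, 0.25, 2.0]
print(get_output(l_out, x, deterministic=True))
```

The design choice worth noticing is that the network is just a linked chain of layer objects, so adding regularization such as dropout means inserting one more layer rather than rewriting the underlying math.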

Similar to Lasagne, Blocks is an attempt to add a layer of abstraction on top of Theano, enabling cleaner, simpler, more standardized definitions of deep learning models than raw Theano allows. It’s written by the University of Montreal’s lab, MILA, including some of the same people who helped build Theano and its first high-level interface for defining neural networks, the now-defunct Pylearn2. It’s a bit more flexible than Lasagne, at the cost of a somewhat steeper learning curve. Among other things, Blocks has excellent support for recurrent neural network architectures, so it’s worth a look if you’re interested in exploring that family of models. Alongside TensorFlow, Blocks is the library of choice for many of the APIs we’ve deployed to production at indico.
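For readers new to recurrent models: a recurrent layer carries a hidden state from one timestep to the next. The minimal pure-Python sketch below implements the Elman-style update h_t = tanh(W x_t + U h_{t-1} + b), the kind of recurrence that Blocks packages as reusable recurrent bricks. The `simple_recurrent` function and the hand-picked weights are hypothetical, chosen only to make the behaviour easy to follow; this is not Blocks code.

```python
import math


def simple_recurrent(xs, W, U, b, h0):
    """Run h_t = tanh(W x_t + U h_{t-1} + b) over a sequence; return every hidden state.

    W: hidden x input weights, U: hidden x hidden weights, b: hidden biases.
    """
    h = list(h0)
    states = []
    for x in xs:
        # The right-hand side reads the previous h before it is rebound.
        h = [math.tanh(sum(w * xi for w, xi in zip(W_row, x))
                       + sum(u * hj for u, hj in zip(U_row, h))
                       + bk)
             for W_row, U_row, bk in zip(W, U, b)]
        states.append(h)
    return states


# One input feature, two hidden units (hypothetical hand-picked weights).
W = [[1.0], [0.0]]            # only unit 0 sees the input directly
U = [[0.0, 0.0], [1.0, 0.0]]  # unit 1 copies unit 0's previous state
b = [0.0, 0.0]
states = simple_recurrent([[1.0], [0.0]], W, U, b, h0=[0.0, 0.0])
print(states)
```

Because the second row of `U` feeds unit 0’s previous state into unit 1, unit 1 still reflects the first input one step after it has passed, which is exactly the memory a feed-forward layer lacks.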
